Downstream pipelines (FREE)

A downstream pipeline is any GitLab CI/CD pipeline triggered by another pipeline. A downstream pipeline can be either:

- A parent-child pipeline, which is a downstream pipeline triggered in the same project as the first pipeline.

- A multi-project pipeline, which is a downstream pipeline triggered in a different project than the first pipeline.

Parent-child pipelines and multi-project pipelines can sometimes be used for similar purposes, but there are some key differences.

Parent-child pipelines:

- Run under the same project, ref, and commit SHA as the parent pipeline.

- Affect the overall status of the ref the pipeline runs against. For example, if a pipeline fails for the main branch, it's common to say that "main is broken". The status of a child pipeline doesn't directly affect the status of the ref, unless the child pipeline is triggered with strategy:depend.

- Are automatically canceled if the pipeline is configured with interruptible when a new pipeline is created for the same ref.

- Display only the parent pipelines in the pipeline index page. Child pipelines are visible when visiting their parent pipeline's page.

- Are limited to 2 levels of nesting. A parent pipeline can trigger multiple child pipelines, and those child pipelines can trigger multiple child pipelines (A -> B -> C).

Multi-project pipelines:

- Are triggered from another pipeline, but the upstream (triggering) pipeline does not have much control over the downstream (triggered) pipeline. However, it can choose the ref of the downstream pipeline, and pass CI/CD variables to it.

- Affect the overall status of the ref of the project they run in, but do not affect the status of the triggering pipeline's ref, unless they were triggered with strategy:depend.

- Are not automatically canceled in the downstream project when using interruptible if a new pipeline runs for the same ref in the upstream pipeline. They can be automatically canceled if a new pipeline is triggered for the same ref on the downstream project.

- Are standalone pipelines, because they are normal pipelines that happen to be triggered by an external project. They are all visible on the pipeline index page.

- Are independent, so there are no nesting limits.

Multi-project pipelines

Moved to GitLab Free in 12.8.

You can set up GitLab CI/CD across multiple projects, so that a pipeline in one project can trigger a downstream pipeline in another project. You can visualize the entire pipeline in one place, including all cross-project interdependencies.

For example, you might deploy your web application from three different projects in GitLab. Each project has its own build, test, and deploy process. With multi-project pipelines you can visualize the entire pipeline, including all build and test stages for all three projects.

For an overview, see the Multi-project pipelines demo.

Multi-project pipelines are also useful for larger products that require cross-project interdependencies, like those with a microservices architecture. Learn more in the Cross-project Pipeline Triggering and Visualization demo at GitLab@learn, in the Continuous Integration section.

If you trigger a pipeline in a downstream private project, on the upstream project's pipelines page, you can view:

- The name of the project.

- The status of the pipeline.

If you have a public project that can trigger downstream pipelines in a private project, make sure there are no confidentiality problems.

Trigger a multi-project pipeline from a job in your .gitlab-ci.yml file

Moved to GitLab Free in 12.8.

When you use the trigger keyword to create a multi-project

pipeline in your .gitlab-ci.yml file, you create what is called a trigger job. For example:

rspec:
  stage: test
  script: bundle exec rspec

staging:
  variables:
    ENVIRONMENT: staging
  stage: deploy
  trigger: my/deployment

In this example, after the rspec job succeeds in the test stage,

the staging trigger job starts. The initial status of this

job is pending.

GitLab then creates a downstream pipeline in the

my/deployment project and, as soon as the pipeline is created, the

staging job succeeds. The full path to the project is my/deployment.

You can view the status for the pipeline, or you can display the downstream pipeline's status instead.

The user that creates the upstream pipeline must be able to create pipelines in the

downstream project (my/deployment) too. If the downstream project is not found,

or the user does not have permission to create a pipeline there,

the staging job is marked as failed.

Specify a downstream pipeline branch

You can specify a branch name for the downstream pipeline to use. GitLab uses the commit on the head of the branch to create the downstream pipeline.

rspec:
  stage: test
  script: bundle exec rspec

staging:
  stage: deploy
  trigger:
    project: my/deployment
    branch: stable-11-2

Use:

- The project keyword to specify the full path to a downstream project. In GitLab 15.3 and later, variable expansion is supported.

- The branch keyword to specify the name of a branch in the project specified by project. In GitLab 12.4 and later, variable expansion is supported.
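As a rough sketch of the variable expansion mentioned for the branch keyword, the branch name can come from a CI/CD variable instead of being hardcoded. The variable name DOWNSTREAM_BRANCH is hypothetical and is used only for illustration:

```yaml
variables:
  # Hypothetical variable; any CI/CD variable visible to the trigger job should work.
  DOWNSTREAM_BRANCH: stable-11-2

staging:
  stage: deploy
  trigger:
    project: my/deployment
    # Expanded when the trigger job runs (GitLab 12.4 and later).
    branch: $DOWNSTREAM_BRANCH
```

With this layout, changing the target branch only requires changing the variable's value, for example from the project's CI/CD settings.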

Pipelines triggered on a protected branch in a downstream project use the role of the user that ran the trigger job in the upstream project. If the user does not have permission to run CI/CD pipelines against the protected branch, the pipeline fails. See pipeline security for protected branches.

Use rules or only/except with multi-project pipelines

You can use CI/CD variables or the rules keyword to

control job behavior for multi-project pipelines. When a

downstream pipeline is triggered with the trigger keyword,

the value of the $CI_PIPELINE_SOURCE predefined variable

is pipeline for all its jobs.

If you use only/except to control job behavior, use the

pipelines keyword.
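For illustration, a downstream project's configuration might restrict a job to pipelines triggered by an upstream pipeline in either of the two ways described above. The job names are hypothetical:

```yaml
# .gitlab-ci.yml in the downstream project

# With rules: run only when an upstream pipeline triggered this pipeline.
deploy-from-upstream:
  script:
    - echo "Triggered by an upstream pipeline"
  rules:
    - if: $CI_PIPELINE_SOURCE == "pipeline"

# With only/except: the same condition, expressed with the pipelines keyword.
deploy-from-upstream-legacy:
  script:
    - echo "Triggered by an upstream pipeline"
  only:
    - pipelines
```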

Trigger a multi-project pipeline by using the API

Moved to GitLab Free in 12.4.

When you use the CI_JOB_TOKEN to trigger pipelines,

GitLab recognizes the source of the job token. The pipelines become related,

so you can visualize their relationships on pipeline graphs.

These relationships are displayed in the pipeline graph by showing inbound and outbound connections for upstream and downstream pipeline dependencies.

When using:

- CI/CD variables or rules to control job behavior, the value of the $CI_PIPELINE_SOURCE predefined variable is pipeline for multi-project pipelines triggered through the API with CI_JOB_TOKEN.

- only/except to control job behavior, use the pipelines keyword.
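A minimal sketch of such an API call from a job, using the pipeline trigger endpoint. It assumes that curl is available in the job's image, that the downstream project has the hypothetical ID 9, and that the downstream ref is main:

```yaml
trigger-downstream-via-api:
  stage: deploy
  script:
    # CI_JOB_TOKEN identifies the upstream job, so GitLab can relate the two pipelines.
    # The project ID (9) and the ref (main) are placeholders for illustration.
    - 'curl --fail --request POST --form "token=$CI_JOB_TOKEN" --form "ref=main" "$CI_API_V4_URL/projects/9/trigger/pipeline"'
```

Unlike a trigger job, this is a regular job with a script, so it needs a runner to execute it.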

Parent-child pipelines

Introduced in GitLab 12.7.

As pipelines grow more complex, a few related problems start to emerge:

- The staged structure, where all steps in a stage must be completed before the first job in the next stage begins, causes arbitrary waits, slowing things down.

- Configuration for the single global pipeline becomes very long and complicated, making it hard to manage.

- Imports with include increase the complexity of the configuration, and create the potential for namespace collisions where jobs are unintentionally duplicated.

- Pipeline UX can become unwieldy with so many jobs and stages to work with.

Additionally, sometimes the behavior of a pipeline needs to be more dynamic. Being able to choose whether to start sub-pipelines is powerful, especially if the YAML is dynamically generated.

Similarly to multi-project pipelines, a pipeline can trigger a set of concurrently running downstream child pipelines, but in the same project:

- Child pipelines still execute each of their jobs according to a stage sequence, but are free to continue forward through their stages without waiting for unrelated jobs in the parent pipeline to finish.

- The configuration is split up into smaller child pipeline configurations. Each child pipeline contains only relevant steps which are easier to understand. This reduces the cognitive load to understand the overall configuration.

- Imports are done at the child pipeline level, reducing the likelihood of collisions.

Child pipelines work well with other GitLab CI/CD features:

- Use rules: changes to trigger pipelines only when certain files change. This is useful for monorepos, for example.

- Since the parent pipeline in .gitlab-ci.yml and the child pipeline run as normal pipelines, they can have their own behaviors and sequencing in relation to triggers.
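As a sketch of the rules: changes case in a monorepo, a trigger job might start a child pipeline only when files for one service change. The microservice_a/ directory layout is an assumption made for illustration:

```yaml
microservice_a:
  trigger:
    include: path/to/microservice_a.yml
  rules:
    # Hypothetical layout: all sources for this service live under microservice_a/.
    - changes:
        - microservice_a/**/*
```

Each service in the repository could have its own trigger job of this shape, so unrelated changes do not start its child pipeline.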

See the trigger keyword documentation for full details on how to

include the child pipeline configuration.

For an overview, see Parent-Child Pipelines feature demo.

NOTE: The artifact containing the generated YAML file must not be larger than 5MB.

Trigger a parent-child pipeline

The simplest case is triggering a child pipeline using a local YAML file to define the pipeline configuration. In this case, the parent pipeline triggers the child pipeline, and continues without waiting:

microservice_a:
  trigger:
    include: path/to/microservice_a.yml

You can include multiple files when defining a child pipeline. The child pipeline's configuration is composed of all configuration files merged together:

microservice_a:
  trigger:
    include:
      - local: path/to/microservice_a.yml
      - template: Security/SAST.gitlab-ci.yml

In GitLab 13.5 and later,

you can use include:file to trigger child pipelines

with a configuration file in a different project:

microservice_a:
  trigger:
    include:
      - project: 'my-group/my-pipeline-library'
        ref: 'main'
        file: '/path/to/child-pipeline.yml'

The maximum number of entries that are accepted for trigger:include is three.

Merge request child pipelines

To trigger a child pipeline as a merge request pipeline we need to:

- Set the trigger job to run on merge requests:

  # parent .gitlab-ci.yml
  microservice_a:
    trigger:
      include: path/to/microservice_a.yml
    rules:
      - if: $CI_MERGE_REQUEST_ID

- Configure the child pipeline by either:

  - Setting all jobs in the child pipeline to evaluate in the context of a merge request:

    # child path/to/microservice_a.yml
    workflow:
      rules:
        - if: $CI_MERGE_REQUEST_ID

    job1:
      script: ...

    job2:
      script: ...

  - Alternatively, setting the rule per job. For example, to create only job1 in the context of merge request pipelines:

    # child path/to/microservice_a.yml
    job1:
      script: ...
      rules:
        - if: $CI_MERGE_REQUEST_ID

    job2:
      script: ...

Dynamic child pipelines

Introduced in GitLab 12.9.

Instead of running a child pipeline from a static YAML file, you can define a job that runs your own script to generate a YAML file, which is then used to trigger a child pipeline.

This technique can be very powerful in generating pipelines targeting content that changed or to build a matrix of targets and architectures.

For an overview, see Create child pipelines using dynamically generated configurations.

We also have an example project using

Dynamic Child Pipelines with Jsonnet

which shows how to use a data templating language to generate your .gitlab-ci.yml at runtime.

You could use a similar process for other templating languages like

Dhall or ytt.

The artifact path is parsed by GitLab, not the runner, so the path must match the

syntax for the OS running GitLab. If GitLab is running on Linux but using a Windows

runner for testing, the path separator for the trigger job would be /. Other CI/CD

configuration for jobs, like scripts, that use the Windows runner would use \.

For example, to trigger a child pipeline from a dynamically generated configuration file:

generate-config:
  stage: build
  script: generate-ci-config > generated-config.yml
  artifacts:
    paths:
      - generated-config.yml

child-pipeline:
  stage: test
  trigger:
    include:
      - artifact: generated-config.yml
        job: generate-config

The generated-config.yml is extracted from the artifacts and used as the configuration

for triggering the child pipeline.
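The generation script can be anything that writes valid CI/CD YAML. As a minimal sketch, assuming a POSIX shell in the job's image and two hypothetical build targets (linux and windows), an inline loop could stand in for generate-ci-config:

```yaml
generate-config:
  stage: build
  script:
    # Writes one job per target into generated-config.yml.
    # The target names and job names are placeholders for illustration.
    - |
      for target in linux windows; do
        printf 'build-%s:\n  script: echo "Building for %s"\n' "$target" "$target"
      done > generated-config.yml
  artifacts:
    paths:
      - generated-config.yml
```

The generated file then defines two jobs, build-linux and build-windows, which run in the child pipeline once a trigger job includes the artifact.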

In GitLab 12.9, the child pipeline could fail to be created in certain cases, causing the parent pipeline to fail. This is resolved in GitLab 12.10.

Nested child pipelines

- Introduced in GitLab 13.4.

- Feature flag removed in GitLab 13.5.

Parent and child pipelines were introduced with a maximum depth of one level of child pipelines, which was later increased to two. A parent pipeline can trigger many child pipelines, and these child pipelines can trigger their own child pipelines. It's not possible to trigger another level of child pipelines.
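A rough sketch of the two permitted levels, assuming hypothetical file names child.yml and grandchild.yml. The second file is shown as comments because it lives separately from the parent configuration:

```yaml
# .gitlab-ci.yml (parent pipeline, level A)
trigger-child:
  trigger:
    include: path/to/child.yml

# path/to/child.yml (child pipeline, level B) would contain:
#
# trigger-grandchild:
#   trigger:
#     include: path/to/grandchild.yml
#
# path/to/grandchild.yml (level C) can define regular jobs,
# but cannot trigger a further level of child pipelines.
```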

For an overview, see Nested Dynamic Pipelines.

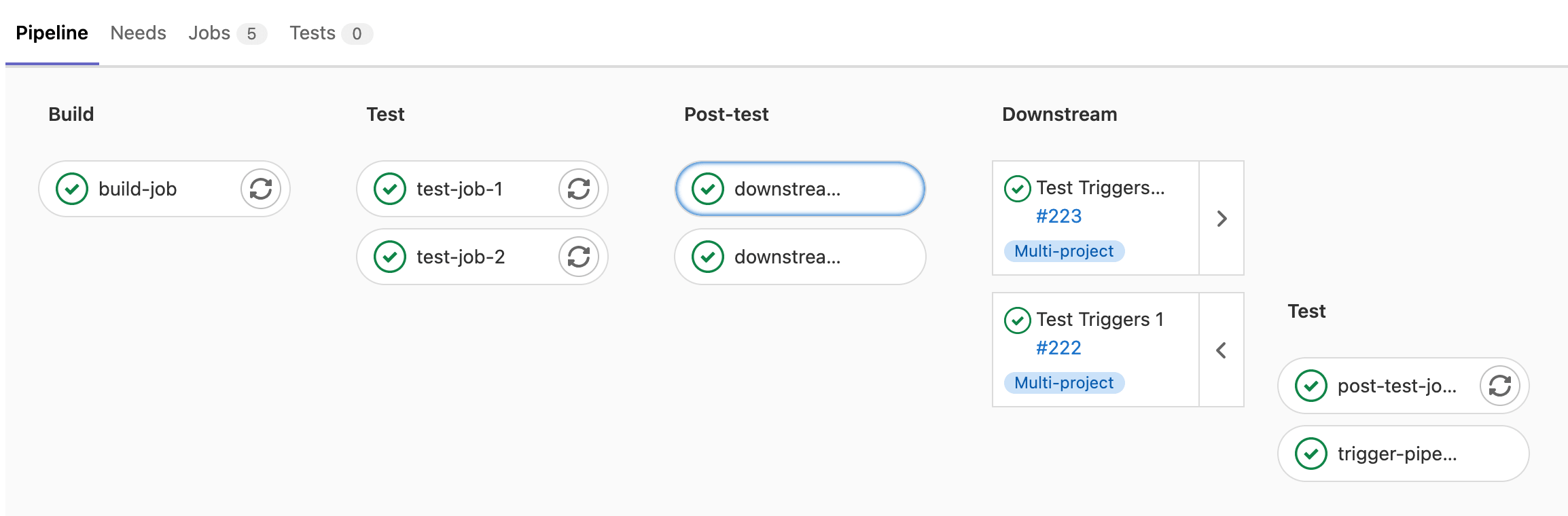

View a downstream pipeline

In the pipeline graph view, downstream pipelines display as a list of cards on the right of the graph.

Retry a downstream pipeline

- Retry from graph view introduced in GitLab 15.0 with a flag named downstream_retry_action. Disabled by default.

- Retry from graph view generally available and feature flag removed in GitLab 15.1.

To retry a completed downstream pipeline, select Retry ({retry}):

- From the downstream pipeline's details page.

- On the pipeline's card in the pipeline graph view.

Cancel a downstream pipeline

- Retry from graph view introduced in GitLab 15.0 with a flag named downstream_retry_action. Disabled by default.

- Retry from graph view generally available and feature flag removed in GitLab 15.1.

To cancel a downstream pipeline that is still running, select Cancel ({cancel}):

- From the downstream pipeline's details page.

- On the pipeline's card in the pipeline graph view.

Mirror the status of a downstream pipeline in the trigger job

- Introduced in GitLab Premium 12.3.

- Moved to GitLab Free in 12.8.

You can mirror the pipeline status from the triggered pipeline to the source trigger job

by using strategy: depend:

::Tabs

:::TabTitle Multi-project pipeline

trigger_job:
  trigger:
    project: my/project
    strategy: depend

:::TabTitle Parent-child pipeline

trigger_job:
  trigger:
    include:
      - local: path/to/child-pipeline.yml
    strategy: depend

::EndTabs

View multi-project pipelines in pipeline graphs (PREMIUM)

When you trigger a multi-project pipeline, the downstream pipeline displays to the right of the pipeline graph.

In pipeline mini graphs, the downstream pipeline displays to the right of the mini graph.

Pass artifacts to a downstream pipeline

You can pass artifacts to a downstream pipeline by using needs:project.

- In a job in the upstream pipeline, save the artifacts using the artifacts keyword.

- Trigger the downstream pipeline with a trigger job:

  build_artifacts:
    stage: build
    script:
      - echo "This is a test artifact!" >> artifact.txt
    artifacts:
      paths:
        - artifact.txt

  deploy:
    stage: deploy
    trigger: my/downstream_project

- In a job in the downstream pipeline, fetch the artifacts from the upstream pipeline by using needs:project. Set job to the job in the upstream pipeline to fetch artifacts from, ref to the branch, and artifacts: true.

  test:
    stage: test
    script:
      - cat artifact.txt
    needs:
      - project: my/upstream_project
        job: build_artifacts
        ref: main
        artifacts: true

Pass artifacts from a Merge Request pipeline

When you use needs:project to pass artifacts to a downstream pipeline,

the ref value is usually a branch name, like main or development.

For merge request pipelines, the ref value is in the form of refs/merge-requests/<id>/head,

where id is the merge request ID. You can retrieve this ref with the CI_MERGE_REQUEST_REF_PATH

CI/CD variable. Do not use a branch name as the ref with merge request pipelines,

because the downstream pipeline attempts to fetch artifacts from the latest branch pipeline.

To fetch the artifacts from the upstream merge request pipeline instead of the branch pipeline,

pass this variable to the downstream pipeline using variable inheritance:

- In a job in the upstream pipeline, save the artifacts using the artifacts keyword.

- In the job that triggers the downstream pipeline, pass the $CI_MERGE_REQUEST_REF_PATH variable by using variable inheritance:

  build_artifacts:
    stage: build
    script:
      - echo "This is a test artifact!" >> artifact.txt
    artifacts:
      paths:
        - artifact.txt

  upstream_job:
    variables:
      UPSTREAM_REF: $CI_MERGE_REQUEST_REF_PATH
    trigger:
      project: my/downstream_project
      branch: my-branch

- In a job in the downstream pipeline, fetch the artifacts from the upstream pipeline by using needs:project. Set the ref to the UPSTREAM_REF variable, and job to the job in the upstream pipeline to fetch artifacts from:

  test:
    stage: test
    script:
      - cat artifact.txt
    needs:
      - project: my/upstream_project
        job: build_artifacts
        ref: $UPSTREAM_REF
        artifacts: true

This method works for fetching artifacts from a regular merge request parent pipeline, but fetching artifacts from merge results pipelines is not supported.

Pass CI/CD variables to a downstream pipeline

You can pass CI/CD variables to a downstream pipeline with a few different methods, based on where the variable is created or defined.

Pass YAML-defined CI/CD variables

You can use the variables keyword to pass CI/CD variables to a downstream pipeline,

just like you would for any other job.

For example, in a multi-project pipeline:

rspec:
  stage: test
  script: bundle exec rspec

staging:
  variables:
    ENVIRONMENT: staging
  stage: deploy
  trigger: my/deployment

The ENVIRONMENT variable is passed to every job defined in a downstream

pipeline. It is available as a variable when GitLab Runner picks a job.

In the following configuration, the MY_VARIABLE variable is passed to the downstream pipeline

that is created when the trigger-downstream job is queued. This is because the trigger-downstream

job inherits variables declared in global variables blocks, and these variables are then passed to the downstream pipeline.

variables:
  MY_VARIABLE: my-value

trigger-downstream:
  variables:
    ENVIRONMENT: something
  trigger: my/project

Prevent global variables from being passed

You can stop global variables from reaching the downstream pipeline by using the inherit:variables keyword.

For example, in a multi-project pipeline:

variables:
  MY_GLOBAL_VAR: value

trigger-downstream:
  inherit:
    variables: false
  variables:
    MY_LOCAL_VAR: value
  trigger: my/project

In this example, the MY_GLOBAL_VAR variable is not available in the triggered pipeline.

Pass a predefined variable

You might want to pass some information about the upstream pipeline using predefined variables. To do that, you can use interpolation to pass any variable. For example, in a multi-project pipeline:

downstream-job:
  variables:
    UPSTREAM_BRANCH: $CI_COMMIT_REF_NAME
  trigger: my/project

In this scenario, the UPSTREAM_BRANCH variable with the value of the upstream pipeline's

$CI_COMMIT_REF_NAME is passed to downstream-job. It is available in the

context of all downstream builds.
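On the receiving side, a job in the downstream project can read the passed value like any other CI/CD variable. A minimal sketch, with a hypothetical job name:

```yaml
# .gitlab-ci.yml in the downstream project (my/project)
report-upstream-branch:
  script:
    - echo "Triggered from upstream branch $UPSTREAM_BRANCH"
  rules:
    # Run only when the upstream pipeline passed the variable.
    - if: $UPSTREAM_BRANCH
```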

You cannot use this method to forward job-level persisted variables to a downstream pipeline, as they are not available in trigger jobs.

Upstream pipelines take precedence over downstream ones. If there are two variables with the same name defined in both upstream and downstream projects, the ones defined in the upstream project take precedence.

Pass dotenv variables created in a job (PREMIUM)

You can pass variables to a downstream pipeline with dotenv variable inheritance

and needs:project.

For example, in a multi-project pipeline:

- Save the variables in a .env file.

- Save the .env file as a dotenv report.

- Trigger the downstream pipeline.

  build_vars:
    stage: build
    script:
      - echo "BUILD_VERSION=hello" >> build.env
    artifacts:
      reports:
        dotenv: build.env

  deploy:
    stage: deploy
    trigger: my/downstream_project

- Set the test job in the downstream pipeline to inherit the variables from the build_vars job in the upstream project with needs. The test job inherits the variables in the dotenv report and it can access BUILD_VERSION in the script:

  test:
    stage: test
    script:
      - echo $BUILD_VERSION
    needs:
      - project: my/upstream_project
        job: build_vars
        ref: master
        artifacts: true